Message boards : Number crunching : I think we should restrict work units




**1fast6** · Joined: 2 Jun 06 · Posts: 5 · Credit: 245,858 · RAC: 0

> 1fast6: Haven't you read that the project's big idea for the moment is to NOT release work all the time? Does it make any sense to waste computer time on meaningless crunching?

I guess it depends on your definition of meaningless crunching... all I know is I could have done SOMETHING to contribute this past week. OK, so how about continuing to release workunits that remain in "active status" until the results are validated?

> Do we really know that the servers are waiting for stragglers? Or could they be waiting for their own analysis to complete in order to build the next work units based on the work completed?

Heck, I dunno if they're stragglers, but the server status has looked pretty much like this all weekend: Server Status. I'm just assuming there are a few workunits hung up in limbo somewhere, waiting for the third submission to validate the results... but maybe I'm wrong...


Joined: 21 May 06 · Posts: 73 · Credit: 8,710 · RAC: 0

> ... but maybe I'm wrong...

Perhaps. We all run that risk, particularly when we speculate without data. I've started another thread asking if there is any way to view the WUs.

Is it possible that those WUs have really reached Quorum, and that the fact that there are no WUs available is that there is some other asynchronous work (perhaps being done within CERN - perhaps even by **humans**) that needs to complete before more work can be sent out? We really don't know why there is no work, or do we?

**David Lahr** · Joined: 27 Dec 05 · Posts: 7 · Credit: 461,367 · RAC: 0

The way I see it, there's a way to "exploit" the system to make sure you get a lion's share of the work. However, this wasn't kept a secret, so if anyone wants to, they can - hence there's no secret advantage being used against people. As someone said, if LHC wants results returned faster, they can modify the BOINC server settings, so no worries there. I think the motive for doing it is stupid (e.g. "it's more important for me to score points than for the work to be shared and completed faster"), but now that the secret is out, the best way to get back at the originators is to do the same yourself. Then they won't be getting a tactical advantage. And if the problem gets severe enough, LHC will do something about it, and we'll all be back on a level playing field.


Joined: 21 May 06 · Posts: 73 · Credit: 8,710 · RAC: 0

> The way I see it, there's a way to "exploit" the system to make sure you get a lion's share of the work. However, this wasn't kept a secret, so if anyone wants to, they can - hence there's no secret advantage being used against people.

It is interesting to me that some consider raising the cache (or time to connect) to be "unfair", but raising the resource percentage to favor LHC by large factors is "ok" and even somewhat encouraged. Both have the INTENT of getting more LHC work, and one is more effective than the other. One is considered "ok", while the other is considered "unfair" (by some)... Is this rational?

**Keck_Komputers** · Joined: 1 Sep 04 · Posts: 275 · Credit: 2,652,452 · RAC: 0

> It is interesting to me that some consider raising the cache (or time to connect) to be "unfair".

I don't think anyone considers raising the cache size to be unfair; however, it is counterproductive for this project. Raising the resource share is productive for this project.

This project generates work as needed, and when the work is needed it is needed quickly. That is one of the reasons more copies are sent out initially here than at any other production project. A large resource share will cause a host to get work when available and concentrate on that work, so that it is returned quickly. A large cache will cause work to spend more time sitting idle on a host while other work is being processed, delaying its return to the project. So, as you can see, it is very rational to encourage a high resource share and a small cache.

BOINC WIKI · BOINCing since 2002/12/8
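To make the cache argument above concrete, here is a rough Python sketch. It assumes, as a simplification, a host that crunches LHC work exclusively and finishes work units strictly in the order it downloaded them; the real BOINC client scheduler is more involved. The function name `turnaround_hours` and the 2-hour run time per work unit are hypothetical.

```python
# Simplified, hypothetical model: the host crunches only LHC work and finishes
# work units (WUs) strictly in download order (FIFO). Real BOINC scheduling differs.
def turnaround_hours(cache_days: float, hours_per_wu: float) -> list[float]:
    """Hours from download to completion for each WU in a freshly filled cache."""
    # Number of WUs needed to fill the cache (at least one).
    n_units = max(1, int(cache_days * 24 // hours_per_wu))
    # The k-th unit (0-based) waits for k earlier units, then runs for one WU time.
    return [(k + 1) * hours_per_wu for k in range(n_units)]


# Assumed run time of 2 hours per WU, purely for illustration.
for cache in (0.5, 3, 10):
    times = turnaround_hours(cache, hours_per_wu=2.0)
    print(f"cache {cache:>4} days: {len(times)} WUs, last returned after {times[-1]:.0f} h")
```

In this model the last unit of a full cache sits on the host for roughly the whole cache length before it is returned, which is the idle time described above: the tail of a 10-day cache comes back about 20 times later than the tail of a half-day cache.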


Joined: 1 Sep 04 · Posts: 14 · Credit: 3,857 · RAC: 0

> I don't think anyone considers raising the cache size to be unfair; however, it is counterproductive for this project

I DO! I think it's totally unfair... just cuz the option is there doesn't make it right for all to change it... it is there for a group of puters on one's network/team, not really meant for the single puter user, cuz you shouldn't need it if everyone weren't getting 3-5 days of cache at a time. The work would be returned faster if more puters were able to process the units. The problem is we're WAITING FOR THE GREEDY ONES TO FINISH THE UNITS THEY ALREADY HAVE, cuz they got, like, what, 10-15 units. Having 10 units sitting waiting to be processed while others are dry is sooo stupid. I've had 1 or 2 units of crunching in like two weeks... it's always outta work cuz of you greedy ones. So how is letting a majority of your community run without units for like 6 outta 7 days a week productive? And also, why accept any more users if you don't have the work for the ones already here?


Joined: 1 Sep 04 · Posts: 14 · Credit: 3,857 · RAC: 0

> Is it possible that those WUs have really reached Quorum, and that the fact that there are no WUs available is that there is some other asynchronous work (perhaps being done within CERN - perhaps even by **humans**) that needs to complete before more work can be sent out? We really don't know why there is no work, or do we?

Good point. It may not always be greedy ones taking all the units; there are rare times, like he said above, when there might be something going on we don't see.


Joined: 19 May 06 · Posts: 20 · Credit: 297,111 · RAC: 0

> I don't think anyone considers raising the cache size to be unfair; however, it is counterproductive for this project.

A large cache is fair in the sense that it's not against the rules, but as you said, it's counterproductive, and at least some people think it's unfair, probably because of this. It's kind of like saying an action is not illegal, but it is immoral. You can still do it because you're not breaking any rules or laws, but not everyone is going to like it or agree with it. They can't do much about it either, though.

As I understand the BOINC system, you're right about how the settings work, so I agree. A large resource share tells your computer to spend a lot of time on LHC. A large cache tells your computer to grab a lot of work for LHC. The idea of the first is to complete work for LHC faster by not splitting your time among multiple projects when LHC has work to do. Getting more work units as well is a result of the setting, but not the primary intention, so this is productive. The idea of the second is to grab a lot of work so you have it for a long period of time. There are some good reasons for doing this, but getting lots of units so you get lots of credit is not one of them. In this case you slow down the project by sitting on work that other people could have been doing, so this is counterproductive for the project.

I suppose there could also be something going on behind the scenes that we're not aware of, but would this site still list work units as in progress (not returned) if they weren't actually out in the hands of BOINC users? I suspect not, but I don't know for sure; that's why I'm asking.

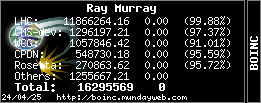

**Ray Murray** · Joined: 29 Sep 04 · Posts: 281 · Credit: 11,888,115 · RAC: 0

> ...the fact that there are no WUs available is that there is some other asynchronous work (perhaps being done within CERN - perhaps even by **humans**) that needs to complete before more work can be sent out?

At the start of the project there was always plenty of work from the initial studies, but since that is complete, there is less for us to do and more for those on site doing the actual building. My understanding, although I am sure somebody will correct me, is that the Accelerator, all 27 kilometers of it (that's more than 16 miles for non-Europeans), has to be designed, built and calibrated a section at a time. This means that they cannot send out the simulation work for section F for us to crunch before they have sections A through E physically completed and tested. The project isn't just about numbers; there are magnets to position and thousands of miles of associated cabling to lay. It is therefore no wonder that work is sporadic while the physical catches up with the theoretical.

While work is available, I have my cache set to 3 days, knowing that my machine will complete all that work in a little over 2 days. I suspend work on all my other projects so that my machine works exclusively on LHC when it can. This usually means that I only get two or, rarely, three batches of WUs before they run out, but I'm happy that the work is returned within a couple of days and certainly well within deadline. If that makes me a credit whore, then colour me guilty.

I do agree, however, that it isn't sensible to ask for 10 days' work and then take 3 weeks to complete it. This clearly just causes annoyance to those who can't get any work at all. I also agree that perhaps Account Creation should be switched off, as there is clearly already a wide enough user base, and maybe shorter deadlines would limit the number of hoarded WUs, allowing them to be sent out again earlier if they time out. The problem there is that there doesn't appear to be an Admin just now, until they can press-gang one of their students.


Joined: 21 May 06 · Posts: 73 · Credit: 8,710 · RAC: 0

Perhaps this is a Social or Psychological experiment and not one of Physics... I can just see the paper: "Volunteer Responses to Contrived Shortages in an Altruistic Computing Environment..." Does anyone have any insight into how CERN intended to build and configure the LHC **before** they joined BOINC?


Joined: 2 Sep 04 · Posts: 28 · Credit: 44,344 · RAC: 0

> I've started another thread asking if there is any way to view the WUs.

Why do I get the feeling that you'd like to see the WUs so that you can try to associate them with a user whom you may believe can be pressured into returning them faster? I smell a witch hunt brewing. :(

I no longer micromanage my systems; I rely on the infrastructure to work as designed. Granted, in the early days, under certain conditions (quite a few actually :) the infrastructure failed to work properly. Those days are pretty much history, rendering micromanaging unnecessary. The system has worked well enough to date. If the project team thought there was a flaw, or the scientists were clamoring for answers earlier, they might be inclined to address a perceived "problem". IMHO, impatient users are not a problem, and the system doesn't look broke... Does it really need fixing?


Joined: 1 Sep 04 · Posts: 14 · Credit: 3,857 · RAC: 0

Don't need to go any further than the top crunchers to see who is hoarding all the work units. Kinda simple really :) No witch hunt necessary :) Well, at least there was a top users selection on SETI@home, so I may be wrong; I haven't looked on here yet. And it's only a small number of people who are changing their caches, like the 15-20 units we're waiting on right now. Most people just install and use, and never even come into the forums or go to change preferences.


Joined: 1 Sep 04 · Posts: 14 · Credit: 3,857 · RAC: 0

69,624 total LHC@home users... isn't the work unit total like half that anyway? If so, it seems there are more users than even work units generated... but I can't remember the starting number of workunits. OK, got this off the LHC@home alpha site, so they have at least this many units... too bad account creation is closed lol

> Server Status: Up, 143681 workunits to crunch, 38 concurrent connections
> http://lhcathome-alpha.cern.ch/


Joined: 19 May 06 · Posts: 20 · Credit: 297,111 · RAC: 0

> 69,624 total LHC@home users... isn't the work unit total like half that anyway?

The "Work to be done!" thread has a few numbers. I think this most recent batch was around 67,000 units, but the one before this was about 85,000 units. So I guess it's kind of variable.

**Steve Cressman** · Joined: 28 Sep 04 · Posts: 47 · Credit: 6,394 · RAC: 0


Joined: 1 Sep 04 · Posts: 14 · Credit: 3,857 · RAC: 0

I got that total from a page that listed every BOINC user total... so it may or may not be authentic, as I don't know who made it.


Joined: 1 Sep 04 · Posts: 14 · Credit: 3,857 · RAC: 0

Wow, this is taking forever for you guys to finish your over-cached WUs... what a joke.


Joined: 4 Sep 05 · Posts: 112 · Credit: 2,319,063 · RAC: 8

> wow this is taking forever for you guys to finish your over-cached WUs...

What over-cache? These will be lost or abandoned WUs, redundant now as the quorums have well and truly been met. Any in that category are orphaned in the DB. Known problem and no fix yet. I see you have your computers hidden so we can't tell what you've done, hmmm.

Click here to join the #1 Aussie Alliance on LHC.


Joined: 29 Nov 05 · Posts: 9 · Credit: 1,266,935 · RAC: 0

I not only had 2 days of work left when LHC ran out of work, I also suspended all other projects on all machines for as long as LHC had work to offer, then turned them back on when it ran dry, so it takes me 4-5 days to clear the cached WUs out. The INCA computer (the 3.4 GHz with 2 GB RAM) is not always connected, so that one has the worst lag time on WU return. On that machine I grab it all up from all projects (I only connect it regularly when LHC has work) and wait until 1 day before the shortest deadline to connect it again. That's why that machine has the most CPDN and CPDN-Seasonal credits. INCA is 10 days on all projects (home profile). The other 2 machines are work profile (1-day cache) on all projects, but home for LHC, for expert scarce-WU pigging.

The 1.7 GHz machine is my file sharing (eMule) machine, and it keeps the Verizon Wireless CellPhone Modem (I'm a 99.999% bandwidth hog on VZW, using 30-60 GB per month on a traffic-shaped 14K up/14K down low-priority, 5-7K daytime, bandwidth). The 1 GB 3.4 GHz is me using BOINC at work.

If LHC always had work, then I wouldn't be so greedy :) And I'd probably be at the 85th percentile for RAC/Total and not 99.5% RAC and 96% total :) Check this out: I pigged about 4600 credits in the past 3 work batches. It is important to max-cache LHC because it takes only 18-30 hours for them to run out of work once they put it up (looks like they put up 80000-150000 WUs at a time).

My... LinkSite | Blog | Pictures

**Jack H** · Joined: 22 Dec 05 · Posts: 27 · Credit: 46,565 · RAC: 0


©2026 CERN